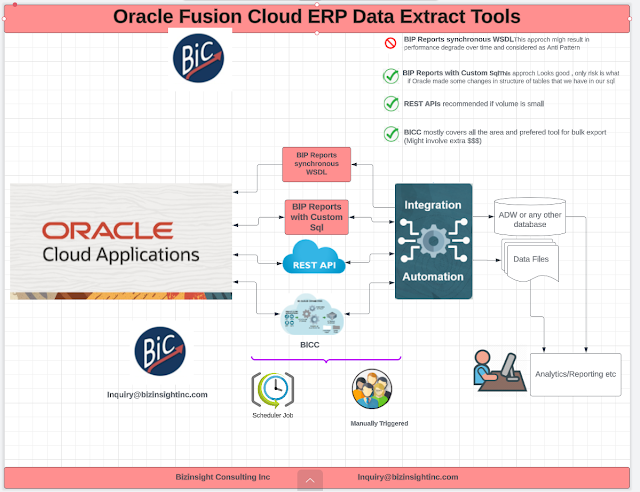

Oracle Fusion Cloud Data Extraction Tools

Data Extraction from cloud is always a big Challenge . With Oracle cloud fusion , depending on the use case and the destination for that data, we have many options. In the blog I will list some of these options/tools ( especially the one that I usually used in implementations)

Feel free to reach us for additional details (inquiry@bizinsightinc.com)

Business Intelligence Cloud Connect (BICC) :

Oracle BICC, or Oracle Business Intelligence Cloud Connector, is a cloud-based tool that helps organizations extract data from Oracle Fusion Applications and load it into a variety of data warehouses and business intelligence platforms. If you want to extract medium to high data volumes to a data warehouse/Data Mart, Business Intelligence Cloud Connect (BICC) is the recommended tool.

BICC is included in the Oracle Applications Cloud subscription. While implementing BICC execute an initial data extract to do heavy lifting , followed by regular incremental data extracts based on pre-defined BICC Data Stores for Oracle Cloud ERP.

After you sign into BICC, you can select the offerings from which to extract data, and also specify whether you want to store the extracts on a dedicated external cloud storage or load them into Oracle Universal Content Management (UCM). The extracted data is secure because the security standards applied to Oracle Applications Cloud extend to BICC as well. Extracts run faster depending on what you extract and how. Once extracted data is in external storage or UCM , we can designed OIC jobs to extract data from Oracle OCI storage bucket or from UCM server.

Let's look at what you can do with BICC to extract data efficiently from the products available in your implementation:

This is how a typical workflow for BICC looks like

- Provision your BICC account. BICC is available as part of the Oracle Applications Cloud subscription, additional access provisioning is required and you must sign into BICC to perform all tasks related to the data extraction..

- Review and understand how the BI view objects and database lineages are mapped. Note : Before you extract data, you must know the BI View Objects (VOs) and their attributes , It is very important that you have understanding of mapping of VOs to the corresponding database tables and columns . Good understanding of BIVOs of BICC and underlying Fusion table will help you to design your extract for your business needs.

- Select required offerings and select the associated BIVOs.

- Select a storage location for the cloud extract files , I usually prefer UCM.

- Create an extract schedule or run data extract for the selected BIVOs.

- You can create and run multiple extract schedules and data extracts.

- You can view last cloud extract and check error messages in the case of failures. You can check scheduled jobs’ logs to review issues causing errors and get support to resolve them.

- Download the extracted files from the configured storage location.

Some customers try to access BIP via a synchronous SOAP service and execute a BIP report to get data out of Fusion Applications as part of there data extraction jobs. The response payload is a base64 data set and is usually then decoded by client code. Accessing BIP report via a synchronous SOAP API has many issues , It might work well during the development phase , but during the UAT or Production if user triggered 7-10 concurrent request that are using BIP over synchronous SOAP api , it results in performance degradation and huge bottle neck .This is SOMETHING you don’t want to design /develop or Implement

Another Flavor of BIP is , Design and develop ESS job on top of your BIP report and call that from OIC, that works well and we have implemented in many times ( please note that this is for Incremental Load and not for Initial Load) .Below are the steps for to implement BIP/ESS for Incremental extractions.

This is how a typical workflow for BIP with ESS looks like

2.Create ESS Job for your BIP Report

3.Design OIC flow ( flow #1) to call ESS Job

4.Design OIC flow ( flow #2) , Flow #2 will subscribe to ERP outbound cloud event and keep listing for ESS job events ,

In the flow #2 , read the File extracted by ESS job and load in Target system ( table or data files).

Note - As I have said earlier too , this is very quick and lean process and work well for Incremental load . While reading the data file if your data file ( generated by ESS) is < 10B then use STAGE FILE component of OIC , but if file size is > 10MB then use FTP adapter .

Additional details

- User will Submit OIC flow #1 , that in turns triggered ESS job.

- Once ESS completed , it triggered ERP Outbound events

- OIC Flow #2 catches the event and Read the data extracted by ESS job and load in target tables/files

REST APIs

- REST apis works well for Incremental Load

- These are ideal for transactional operations where you are querying small amounts of data (e.g. <200 rows) from Fusion Applications

- They are used extensively by customers as the backend to many new user interfaces (

- The REST APIs are designed for synchronous operations and highly scale-able